Stephen James Krol

Research Fellow in Machine Learning

This project investigates how automatically generated musical soundscapes can enhance access to the aesthetic experience of visual art for people who are blind or have low vision (BLV). Traditional accessibility tools in museums often focus on factual description and can fail to convey the emotional and atmospheric qualities that sighted patrons experience naturally. This work may be of particular interest to researchers working on accessible creativity support tools, designers of multimodal museum technologies, and members of the BLV community who are looking for new ways to experience visual art. The core research questions of this work are:

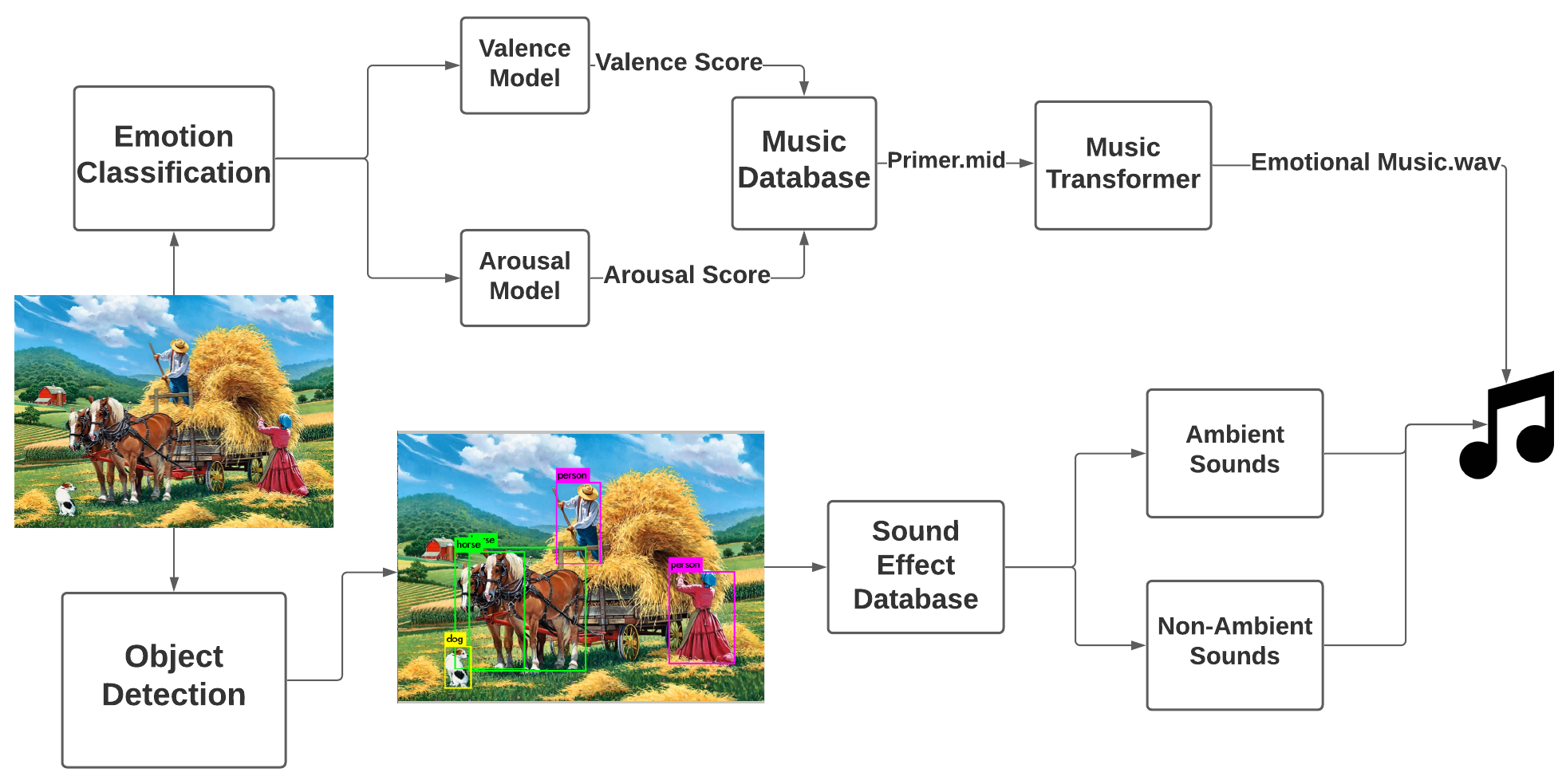

The project began by building a prototype that generates soundscapes from representational paintings in two stages:

Emotion-Based Music Generation: The system first predicts the painting’s perceived emotion using a deep learning classifier trained on the WikiArt Emotions dataset, with the predicted emotion mapped onto the valence–arousal emotion model. The system then selects an emotionally matching MIDI “primer” from an annotated music database and uses Magenta’s Music Transformer to generate new symbolic music that reflects the artwork’s emotional tone.

Contextual Sound Effects: To convey contextual information beyond emotion, the system also detects foreground objects using YOLOv3, retrieves corresponding sound effects from a custom database, and mixes ambient and non-ambient sounds into the musical output. This combination aims to deliver both mood and atmosphere (through the music) and scene-setting and object awareness (through the sound effects).
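The selection logic in the two stages above can be illustrated with a minimal sketch. This is not the project's code: the `Primer` records, the `SOUND_EFFECTS` table, the file names, the [-1, 1] range for valence and arousal, and the choice of Euclidean distance for "emotionally matching" are all assumptions made for illustration. The emotion classifier, YOLOv3 detection, Music Transformer generation, and audio mixing are left out; the sketch only shows how a predicted emotion and a list of detected labels might be turned into a primer and a set of sound effects.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Primer:
    path: str       # hypothetical MIDI file name
    valence: float  # annotated valence, assumed to lie in [-1, 1]
    arousal: float  # annotated arousal, assumed to lie in [-1, 1]


# Hypothetical annotated primer database.
PRIMERS = [
    Primer("calm.mid", 0.6, -0.5),
    Primer("tense.mid", -0.7, 0.8),
    Primer("joyful.mid", 0.8, 0.7),
]

# Hypothetical sound-effect table: detected label -> (file, is_ambient).
SOUND_EFFECTS = {
    "bird": ("birdsong.wav", False),
    "boat": ("waves.wav", True),
    "horse": ("hooves.wav", False),
}


def select_primer(valence, arousal, primers):
    """Return the primer nearest to the predicted emotion in valence-arousal space."""
    return min(
        primers,
        key=lambda p: math.hypot(p.valence - valence, p.arousal - arousal),
    )


def plan_sound_effects(labels):
    """Split the sound effects for detected labels into ambient and non-ambient lists."""
    ambient, foreground = [], []
    for label in dict.fromkeys(labels):  # drop duplicate labels, keep order
        if label not in SOUND_EFFECTS:
            continue  # no sound effect available for this object
        filename, is_ambient = SOUND_EFFECTS[label]
        (ambient if is_ambient else foreground).append(filename)
    return ambient, foreground


if __name__ == "__main__":
    primer = select_primer(0.5, -0.3, PRIMERS)
    ambient, foreground = plan_sound_effects(["boat", "bird", "person", "bird"])
    print(primer.path, ambient, foreground)
```

In this sketch, a painting predicted as mildly positive and low in arousal is matched to the calm primer, and labels without an entry in the table (here `person`) are simply skipped. A real system would pass the chosen primer to the music generator and mix the two sound-effect groups at different levels.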

Although this prototype showed early success, it's worth noting that the technologies in this approach are now dated. Future work should investigate using modern text-to-music models to generate more sophisticated soundscapes from richer image descriptions, rather than relying on the two-stage approach of first predicting emotion and then generating music.

The participant study was designed to evaluate the effectiveness of the generated soundscapes in enhancing the aesthetic experience of BLV users and was held in two stages:

Pilot Study: Involved 33 participants (mostly sighted, 5 with low vision) to test the effectiveness of the system in generating soundscapes. Results were generally positive, with participants reporting emotional accuracy 60% of the time and accurate foreground sound effects 92% of the time. These results motivated the use of the system in an in-depth study with BLV users.

In-Depth Qualitative Study: Conducted with 10 BLV users (5 blind, 5 with low vision) to gain deeper insights into the user experience and the impact of the soundscapes on their aesthetic appreciation of the artwork. Participants took part in semi-structured interviews after interacting with the system and discussed its emotional impact, the usefulness of contextual sounds, and their preferences for combining soundscapes with traditional accessibility methods. Importantly, the evaluation was not focused on benchmarking the system's performance; instead, it explored the subjective experiences of participants to uncover design insights for future implementations.

This project demonstrated that AI-generated musical soundscapes of visual art could improve the art viewing experience of BLV patrons. Participants noted that musical soundscapes added a more immersive, emotionally engaging layer than description alone and helped them build a stronger connection to artworks through mood and atmosphere. The project also revealed the following design considerations for future systems aimed at improving visual art accessibility through automated musical soundscapes: