Stephen James Krol

Research Fellow in Machine Learning


This project investigates how AI systems can better support creative exploration by combining fine-grained controllability of generative models (via Attribute-Regularised VAE-Diffusion) and exploration of diverse high-quality design alternatives (via Quality-Diversity Search). The overarching goal is to move beyond "black-box" generative systems toward tools that allow artists and designers to explore large creative spaces, control meaningful aesthetic attributes, and discover multiple high-quality alternatives rather than a single "optimal" output. This work is primarily aimed at creative practitioners, researchers in computational creativity and AI-based Creativity Support Tools, human-AI interaction researchers, and the evolutionary art and generative design communities. The core research questions driving this work are:

The project consists of two complementary system designs that together enable controllable and diverse creative exploration.

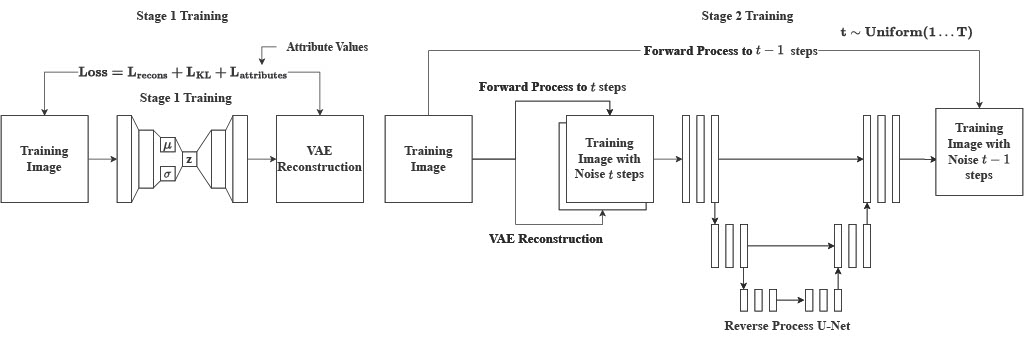

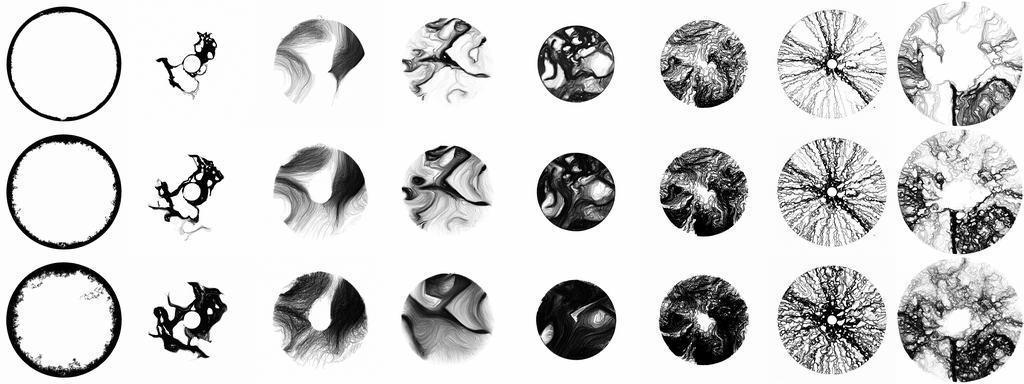

The AR-VAE-Diffusion model combines two components. The first is an Attribute-Regularised Variational Autoencoder (AR-VAE), which uses Attribute-Based Latent Space Regularisation (ALSR) to embed interpretable attributes in specific latent dimensions. The second is a Denoising Diffusion Probabilistic Model (DDPM), which enhances generative quality to produce detailed, high-fidelity images.

Training proceeds in two stages: the AR-VAE is first trained with attribute regularisation, and the diffusion model is then trained conditioned on the VAE reconstructions. During inference, a latent vector is sampled, selected dimensions are adjusted, the modified latent vector is decoded, and diffusion refinement produces a high-quality final image.

Two complex abstract datasets were used: the Curl Noise Dataset (≈68k abstract line drawings produced by an agent-based generative system), with Pixel Density and Generation Size as attributes, and the Kaggle Abstract Art Dataset (≈28k abstract paintings), with Colour Diversity and Structural Complexity as attributes.

The system was evaluated with disentanglement metrics (Interpretability, MIG, Modularity, SAP and Spearman correlation) and by visual inspection. AR-VAE improved interpretability and disentanglement over a Beta-VAE baseline, and the diffusion stage substantially improved visual quality while preserving attribute control.
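To make the attribute-regularisation idea more concrete, the sketch below computes a regularisation term for one latent dimension over a mini-batch. It assumes the loss from the original AR-VAE formulation (pairwise latent distances passed through tanh(δ·) and compared with the sign of pairwise attribute distances by mean absolute error); the function names and the δ value are illustrative and are not taken from this project's code. A real implementation would work on framework tensors and add this term, with a weight, to the usual VAE loss for each regularised dimension.

```python
import math
from typing import Sequence


def sign(x: float) -> float:
    """Return -1.0, 0.0 or 1.0 according to the sign of x."""
    return float((x > 0) - (x < 0))


def alsr_loss(latent_dim: Sequence[float], attribute: Sequence[float], delta: float = 1.0) -> float:
    """Attribute-regularisation loss for one latent dimension over a batch.

    For every ordered pair (i, j) in the batch, the latent distance
    z_i - z_j is squashed with tanh(delta * d) and compared against the sign
    of the attribute distance a_i - a_j. The mean absolute error is small
    when the latent dimension orders the batch the same way the attribute does.
    """
    n = len(latent_dim)
    if n != len(attribute) or n < 2:
        raise ValueError("need two equally sized sequences with at least 2 items")
    total = 0.0
    for i in range(n):
        for j in range(n):
            d_latent = math.tanh(delta * (latent_dim[i] - latent_dim[j]))
            d_attr = sign(attribute[i] - attribute[j])
            total += abs(d_latent - d_attr)
    return total / (n * n)


if __name__ == "__main__":
    pixel_density = [0.1, 0.4, 0.8]  # hypothetical attribute values for a batch of 3
    print(alsr_loss([-2.0, 0.0, 2.0], pixel_density, delta=5.0))  # same ordering
    print(alsr_loss([2.0, 0.0, -2.0], pixel_density, delta=5.0))  # reversed ordering
```

When the latent dimension ranks the batch in the same order as the attribute, the loss is close to 0. Reversing the order pushes it towards its maximum, which is what forces the attribute to become monotonic along that dimension.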

The second component introduces a Quality-Diversity Search (QDS) framework for a generative line drawing system. The framework has four stages: Generation, Evaluation (fitness and diversity), Classification and Breeding. The agent-based line drawing model has 14 genetic parameters that fully determine its visual behaviour.

For fitness evaluation, a participant study was conducted in which 255 randomly generated images were ranked by aesthetic preference. Structural complexity (a compression-based metric) correlated strongly with preference (r = 0.72), so it was adopted as the fitness function. For diversity evaluation, a VAE was trained on 40,000 generated images; latent vectors were extracted and reduced to 2D via PCA and t-SNE, and K-means clustering defined prototypical design families. Diversity was measured as the ratio of populated clusters to the total number of clusters.

The design space was partitioned into these clusters. Each cluster retains its elite (the highest-fitness individual), and mutation-based breeding explores nearby regions of the design space. Compared with a fitness-only genetic algorithm, QD Search increased both fitness and diversity, whereas fitness-only search converged to similar-looking, high-density circular patterns.
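Keeping one elite per cluster resembles MAP-Elites, with learned clusters taking the place of a hand-designed behaviour grid. The following is a minimal sketch under that assumption: `fitness` and `classify` are hypothetical stand-ins for the compression-based complexity score and the cluster assignment, genomes are assumed to be 14 parameters normalised to [0, 1], and the Gaussian mutation with its σ is an illustrative choice rather than the project's actual breeding operator.

```python
import random
from typing import Callable, Dict, List, Tuple

Genome = List[float]  # assumed: 14 parameters normalised to [0, 1]


def mutate(genome: Genome, rng: random.Random, sigma: float = 0.05) -> Genome:
    """Gaussian mutation of every gene, clipped back into [0, 1]."""
    return [min(1.0, max(0.0, g + rng.gauss(0.0, sigma))) for g in genome]


def qd_search(
    fitness: Callable[[Genome], float],
    classify: Callable[[Genome], int],
    n_params: int = 14,
    n_init: int = 50,
    n_iters: int = 500,
    seed: int = 0,
) -> Dict[int, Tuple[float, Genome]]:
    """Keep one elite (highest fitness) per cluster; breed by mutating elites."""
    rng = random.Random(seed)
    archive: Dict[int, Tuple[float, Genome]] = {}

    def try_insert(genome: Genome) -> None:
        cell = classify(genome)   # Classification
        score = fitness(genome)   # Evaluation
        if cell not in archive or score > archive[cell][0]:
            archive[cell] = (score, genome)

    # Generation: a random initial population.
    for _ in range(n_init):
        try_insert([rng.random() for _ in range(n_params)])

    # Breeding: mutate a randomly chosen elite and try to place the child.
    for _ in range(n_iters):
        _, parent = archive[rng.choice(list(archive))]
        try_insert(mutate(parent, rng))
    return archive


def toy_fitness(g: Genome) -> float:
    """Hypothetical fitness: mean gene value."""
    return sum(g) / len(g)


def toy_classify(g: Genome) -> int:
    """Hypothetical classifier: four 'design families' by quartile of the first gene."""
    return min(3, int(g[0] * 4))


if __name__ == "__main__":
    for cell, (score, _) in sorted(qd_search(toy_fitness, toy_classify).items()):
        print(cell, round(score, 3))
```

Because a child only competes with the elite of its own cluster, a low-fitness but novel design family can survive, which is the mechanism that prevents the collapse onto a single pattern seen in fitness-only search.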

This work demonstrates that effective AI-based creativity support tools require interpretable latent spaces, attribute-level control, mechanisms for diversity-aware exploration, and a balance between system agency and user direction. The project reframes generative AI not as an optimiser of single outputs, but as a tool for controlled, diverse creative discovery. The following key findings emerged: