Stephen James Krol

Research Fellow in Machine Learning

This project investigates the design and evaluation of a deep learning-based music variation system that supports creative ownership and active co-creation rather than replacing the musician. The central focus is preserving personal ownership and artistic identity in AI-assisted music composition. Rather than generating complete songs from prompts, this work explores how AI can extend and vary a musician's own ideas through fine-grained control over musical attributes using masked prediction with MusicBERT. The primary target users are practising musicians, including songwriters, producers, jazz musicians, composers, and instrumentalists. The core research questions driving this work are:

Rhapsody Refiner uses MusicBERT, a bidirectional transformer for symbolic music understanding, combined with masked token prediction to enable controllable music variation.

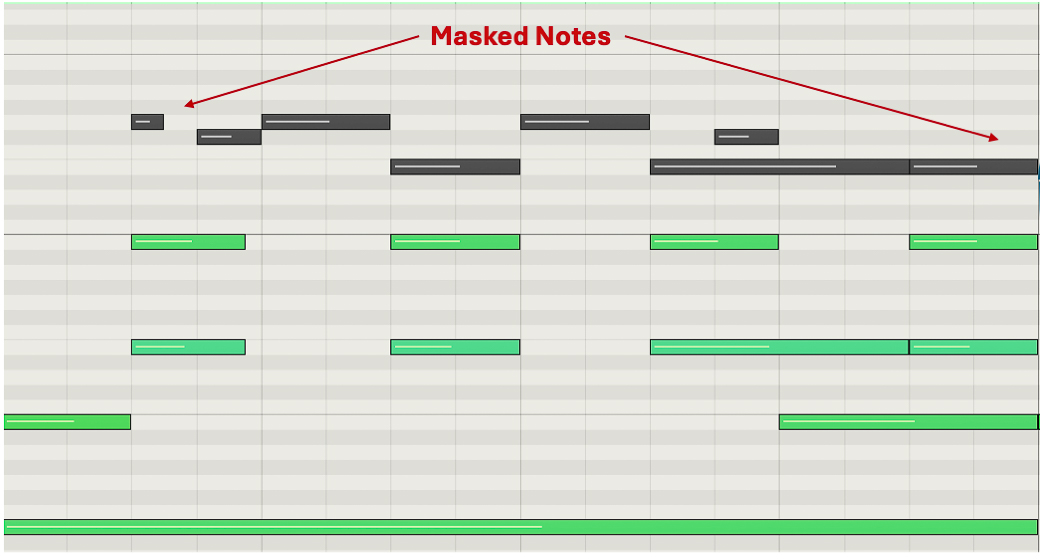

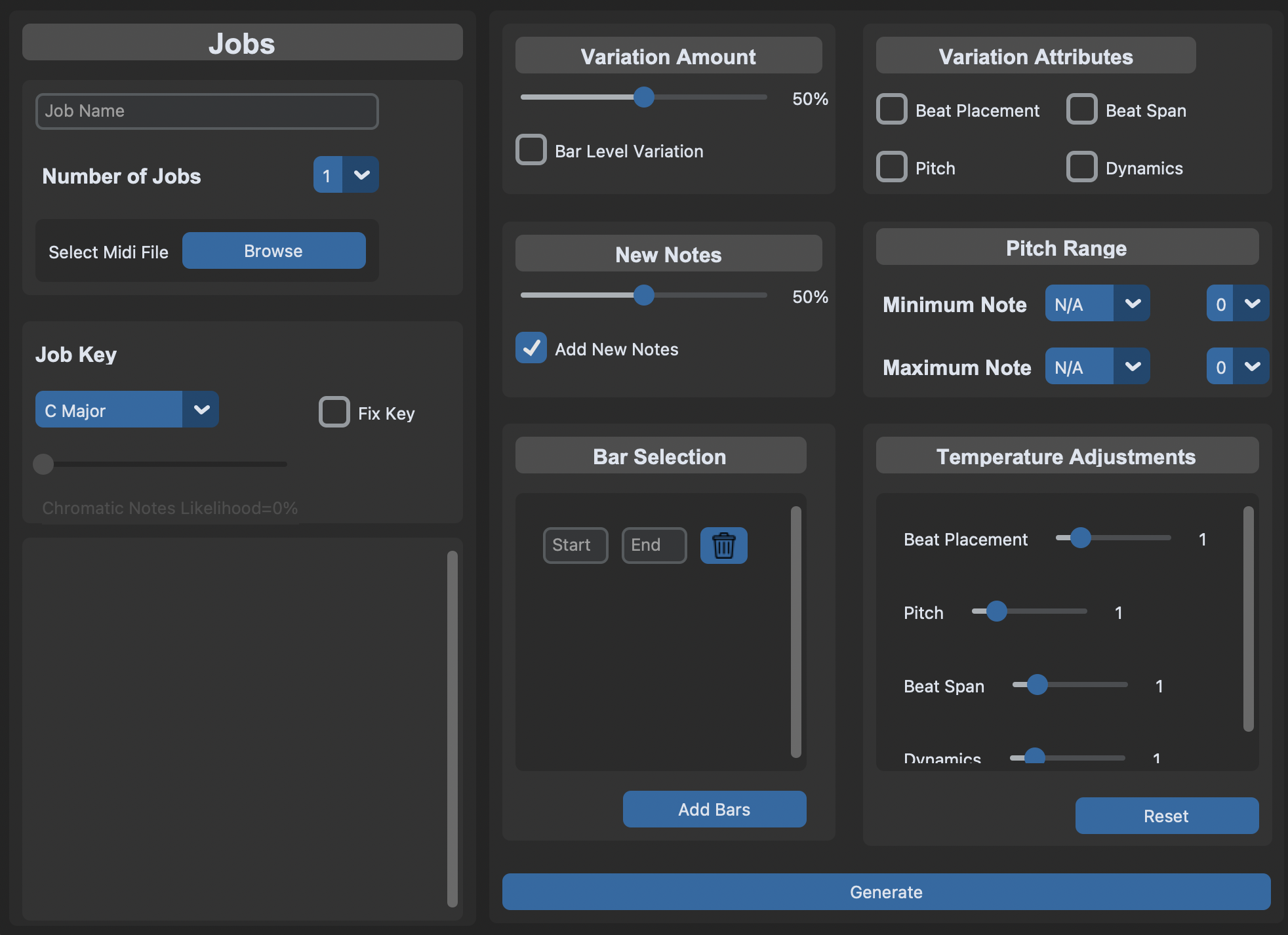

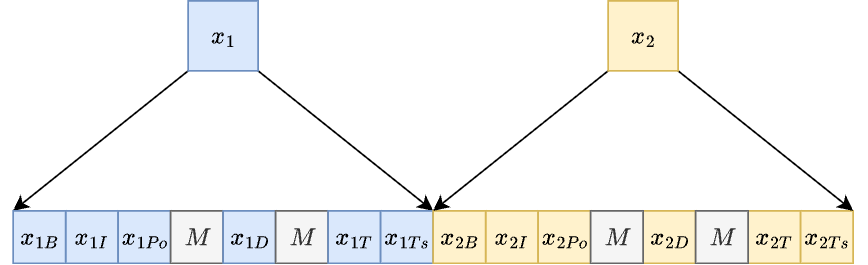

The core architecture consists of four key technical features. First, Octuple Encoding represents each MIDI note with 8 attributes (bar, instrument, pitch, position, velocity, duration, time signature, key), enabling attribute-level control without entanglement. Second, Masked Token Prediction allows the system to mask selected note attributes and predict them autoregressively using MusicBERT, with masking governed by a variation parameter that determines how many notes are modified. Third, Strict Attribute Control lets users selectively vary pitch, beat placement, beat span (duration), and dynamics; only the explicitly selected attributes are masked and predicted. Finally, Logit Filtering restricts each prediction to the correct token type and optionally applies pitch-range constraints and key-fixing with soft scaling. In addition to these four features, the system includes a New Notes Function that lets users add new notes proportionally across bars before prediction.
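The attribute-level masking described above can be illustrated with a minimal sketch. It is not the Rhapsody Refiner implementation: the dictionary layout, the `MASK` placeholder, and the `mask_notes` helper are hypothetical names chosen for illustration, and the attribute order simply follows the list in the text. The sketch assumes a note is stored as one value per attribute, so that masking "pitch" leaves "duration" and the other attributes untouched.

```python
import random

# The eight per-note attributes named in the text (order is an assumption).
ATTRIBUTES = ("bar", "instrument", "pitch", "position",
              "velocity", "duration", "time_signature", "key")
MASK = None  # hypothetical placeholder standing in for a mask token


def mask_notes(notes, attributes, variation, rng):
    """Mask the chosen attributes on a uniformly sampled subset of notes.

    notes: list of dicts keyed by ATTRIBUTES
    attributes: attribute names the user chose to vary
    variation: fraction of notes to modify, from 0.0 to 1.0
    rng: a random.Random instance, passed in for reproducibility
    Returns (masked_copy, sorted indices of the masked notes).
    """
    unknown = set(attributes) - set(ATTRIBUTES)
    if unknown:
        raise ValueError(f"unknown attributes: {sorted(unknown)}")
    if not 0.0 <= variation <= 1.0:
        raise ValueError("variation must be between 0.0 and 1.0")

    n_masked = round(variation * len(notes))
    chosen = set(rng.sample(range(len(notes)), n_masked))

    masked = []
    for index, note in enumerate(notes):
        note = dict(note)  # copy so the user's original phrase is untouched
        if index in chosen:
            for name in attributes:
                note[name] = MASK  # only the selected attributes are hidden
        masked.append(note)
    return masked, sorted(chosen)
```

For example, calling `mask_notes(notes, ["pitch", "velocity"], 0.5, random.Random(0))` on a four-note phrase hides pitch and dynamics on two of the notes while leaving their timing and duration intact, which is the behaviour the Strict Attribute Control feature describes.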

The variation process begins with users uploading MIDI files and selecting a variation amount (0-100%), the attributes to vary, bar ranges, pitch-range or key constraints, and a temperature per attribute. The system then uniformly samples notes to mask, masks only the selected attributes, iteratively predicts masked tokens via MusicBERT, applies probability filtering and temperature scaling, and outputs a modified MIDI file. Crucially, the system never generates a full song from scratch; it requires an initial musical phrase from the user. For technical details of the implementation, see the related reading at the end of the page.
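The filtering and temperature step for a single masked pitch token might look roughly like the sketch below. This is an illustration under stated assumptions, not the system's actual code: the function name `filtered_pitch_probs`, the default `out_of_key_scale` of 0.1, and the choice to treat the pitch range as a hard constraint and the key as a multiplicative soft penalty are all assumptions. The logits are taken to be a plain list indexed by MIDI pitch, as a stand-in for the model's output.

```python
import math
import random


def filtered_pitch_probs(logits, temperature=1.0, pitch_range=None,
                         key_pitch_classes=None, out_of_key_scale=0.1):
    """Turn pitch logits into sampling probabilities with optional constraints.

    logits: list of floats indexed by MIDI pitch (stand-in for model output)
    temperature: > 0; lower values concentrate mass on the top candidates
    pitch_range: optional (low, high) inclusive bounds, applied as a hard filter
    key_pitch_classes: optional set of allowed pitch classes (0-11)
    out_of_key_scale: assumed soft penalty (> 0) for out-of-key pitches
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    low, high = pitch_range if pitch_range is not None else (0, len(logits) - 1)
    allowed = [p for p in range(len(logits)) if low <= p <= high]
    if not allowed:
        raise ValueError("pitch_range excludes every candidate")

    # Subtract the largest allowed logit before exponentiating for stability.
    peak = max(logits[p] for p in allowed)
    weights = [0.0] * len(logits)  # pitches outside the range stay at zero
    for p in allowed:
        weight = math.exp((logits[p] - peak) / temperature)
        if key_pitch_classes is not None and p % 12 not in key_pitch_classes:
            weight *= out_of_key_scale  # discouraged, but still possible
        weights[p] = weight

    total = sum(weights)
    return [w / total for w in weights]


def sample_pitch(probs, rng):
    """Draw one MIDI pitch according to the filtered probabilities."""
    return rng.choices(range(len(probs)), weights=probs)[0]
```

In an iterative loop, a function of this kind would be called once per masked token, with the sampled value written back into the sequence before the next prediction. Scaling out-of-key pitches rather than removing them is one plausible reading of "soft scaling": it biases the result toward the chosen key while still allowing occasional chromatic notes.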

Eight practising musicians with diverse backgrounds (songwriters, jazz musicians, producers) participated in a four-week ecological evaluation. Participants received the software and a tutorial, were asked to compose a song using Rhapsody Refiner, and were encouraged to use the system consistently. They kept reflective journals while system logs recorded usage, and post-study semi-structured interviews were conducted. The data were analysed using inductive thematic analysis. The study design prioritised ecological validity over laboratory control.

The evaluation suggests that AI systems for creative practice should require effort, preserve authorship, and function as ideation partners rather than autonomous creators. The following key findings emerged: